A Breakdown of YouTube and Twitter/X

Some platform companies are trying to decrease or even eradicate misinformation on their platforms. Join me while I explore Twitter/X, a social media platform that I regularly use, and YouTube, the popular video site that seems to have my children captivated.

Today most social media platforms are fighting a war between free speech and preventing harm. While many of them have made progress, the reality is that misinformation spreads fast and is nearly impossible to stop.

Twitter/X – “Freedom of Speech, Not Reach”

Twitter/X focuses on limiting how far misinformation can spread rather than removing content. Under X’s policy of “freedom of speech, not freedom of reach,” posts can typically remain on the platform, but their visibility may be reduced (downranked) if they are considered misleading.

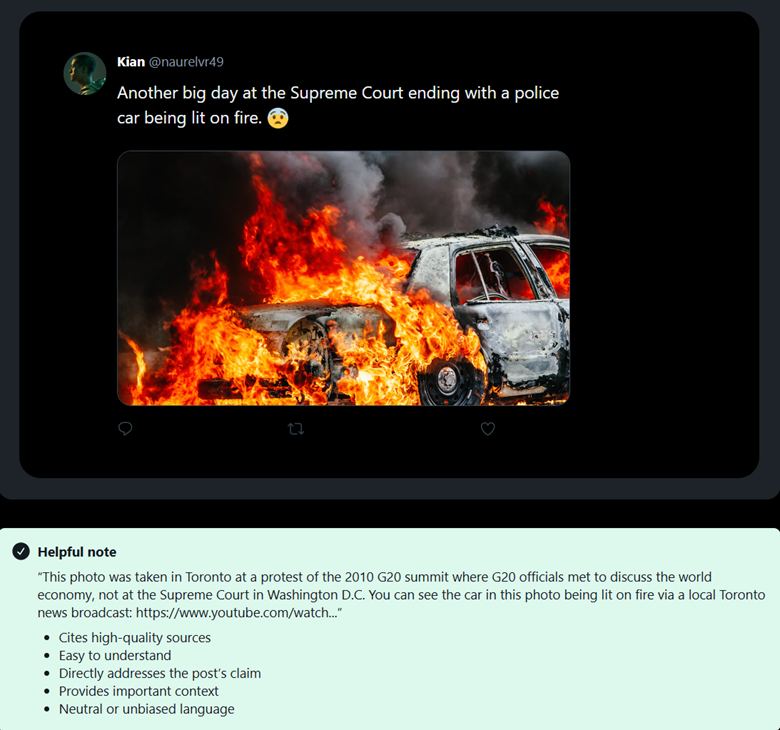

One of the ways that X/Twitter combats misinformation is through Community Notes. Community Notes are collaborative, community-driven notes from contributors that add context, fact-checks or clarification to posts.

What Are X / Twitter Community Notes And How Do They Fight Disinformation?

While Community Notes have not dispelled all instances of misinformation, they have reduced the number of times misinformation is reposted and led more original posters to delete their content once it receives a note. Users also perceive Community Notes as trustworthy.

Another way that X protects users against misinformation is through its policy framework, which limits the spread of content rather than removing it outright. Under its approach of “freedom of speech, not freedom of reach,” posts that may be misleading can be downranked, which means they are less likely to show up in people’s feeds or in search results. This helps reduce how quickly misinformation spreads and how far it can reach.

X’s terms of service and policies outline what is allowed and how content is handled, focusing more on user responsibility and platform transparency. The “X Rules” don’t necessarily stop misinformation, but they target it when it falls into specific harmful categories, such as misleading or deceptive identities and synthetic or manipulated media.

Finally, Twitter/X can take action against accounts that repeatedly share harmful or misleading information. X can limit their reach, temporarily restrict features, or even suspend accounts for more serious infractions.

Overall, Twitter/X’s framework works to give users more information and slow the spread of misinformation, while still allowing open conversation and freedom of speech.

YouTube – A Top-down Approach

YouTube takes a different approach when it comes to handling misinformation. It leans more toward platform control than freedom of speech. Unlike X, YouTube will actually remove content that violates its policies.

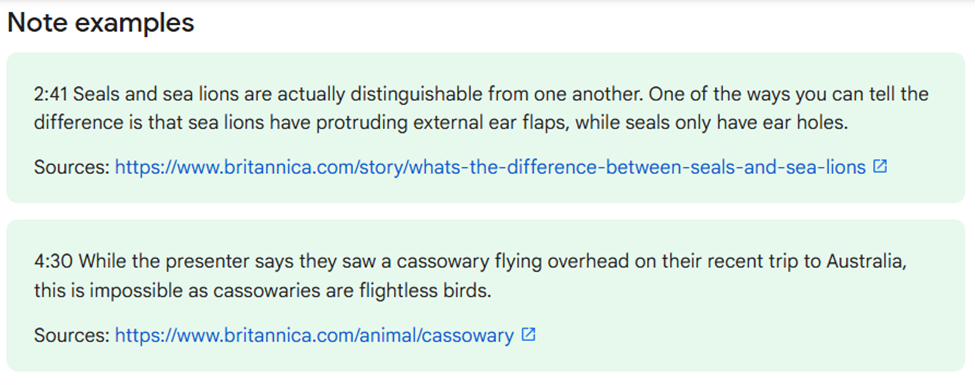

In 2024, YouTube started using a feature to combat misinformation that is similar to Community Notes on Twitter/X. As on X, users on YouTube can write notes on videos that they find inaccurate or unclear.

Write notes on videos – YouTube Help

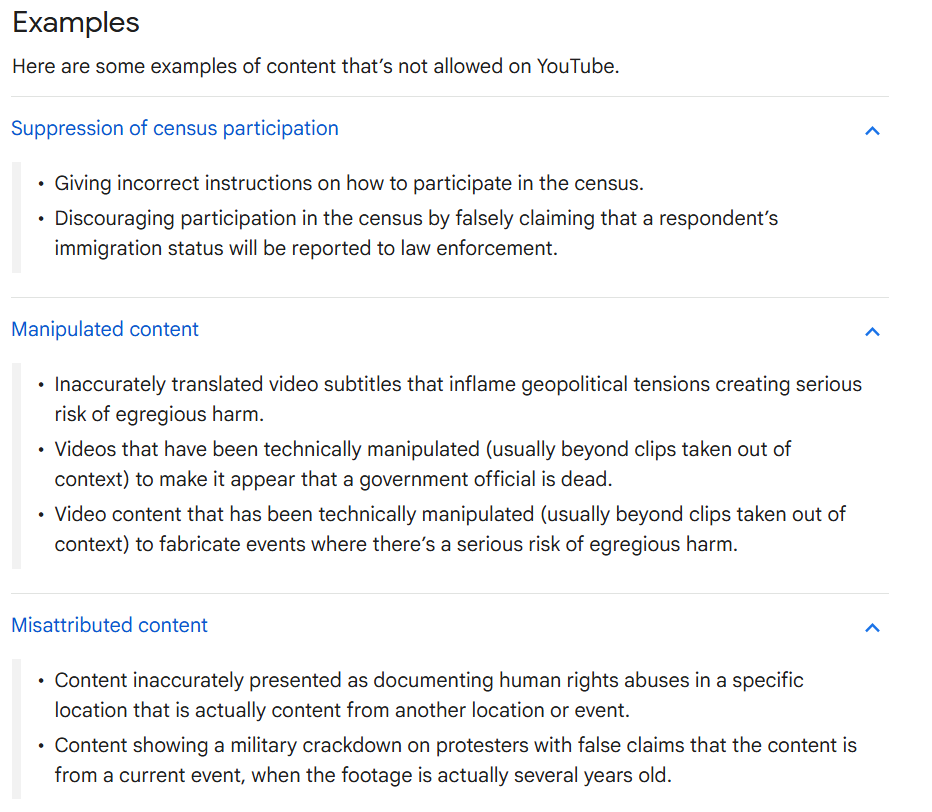

YouTube has a more top-down system where the company plays the primary role in deciding what stays and what goes. According to YouTube’s policies, the platform takes a more direct approach by removing content that is deemed misleading or harmful. This includes videos that are doctored, false claims tied to major issues or old video footage being passed off as something new.

Misinformation policies – YouTube Help

YouTube also works to limit the spread of misinformation by adjusting what shows up in recommendations and search results. On top of that, it uses strikes and can remove channels for repeat offenders. However, content that might violate policy may get an exception if it falls under EDSA (Educational, Documentary, Scientific, or Artistic) because it’s intended to inform or explain.

YouTube feels more controlled. From my perspective as a dad with kids who are watching clips and reels all the time, I feel like YouTube does a few things right. The dangerous stuff usually doesn’t stick around long, and when you search for something, the results tend to be pretty reliable. My 4-year-old can search “Caleb Monster Truck” and nothing weird pops up. It also feels harder for false information to take over.

But YouTube is not perfect. A lot of context gets lost in short clips. Some misleading content doesn’t get removed at all; it just sits there, and the EDSA exception can be a gray area where questionable stuff stays up. I’ve seen clips get shared that aren’t technically fake but are still misleading because they don’t show the whole picture.

An example of this is the viral soccer clip that made it look like Chelsea’s Conor Gallagher ignored a young fan. The video spread quickly and got people fired up. Eventually the full interaction was shared, which told a different story. The clip wasn’t fake and it didn’t break any rules, but it lacked context and painted a picture that wasn’t true.

Bottom Line: It’s Not Just the Platforms

Both Twitter/X and YouTube are trying to solve the problem of misinformation, but they are going about it in different ways. X tends to keep content up and try to slow its spread, while YouTube is more hands-on in controlling what users see by removing content. X can feel too reactive because misinformation spreads quickly and the correction only comes afterward. YouTube still allows misleading content if it doesn’t clearly break the rules, but it feels more controlled.

Misinformation isn’t just a platform problem; it exists everywhere. Information, and misinformation with it, moves quickly and is slow to be corrected. Policies don’t capture everything, and with so much usage, it’s impossible for these platforms to keep up. As a society that consumes massive amounts of social media, we need to work on slowing down and asking questions. We cannot take everything at face value. Simply checking a source or looking for context can make a huge difference in how misinformation spreads. It’s up to us as consumers to develop healthy habits online.
